There have been ongoing discussions in Discord about bot activity on the side chain. While any bot activity violates the Rally Terms of Service, the community bears the responsibility for deciding on a course of action. I think it is generally accepted that bots are active on the side chain, and anyone with evidence is welcome to post it here for those who remain skeptical.

---

What actions are bots taking on the side chain?

Buying: Automated buying lets bot accounts purchase new creator coins ahead of fans. The most recent launch, $UBR, saw roughly 40 purchases in the first minute. How many of those purchases came from suspect accounts?

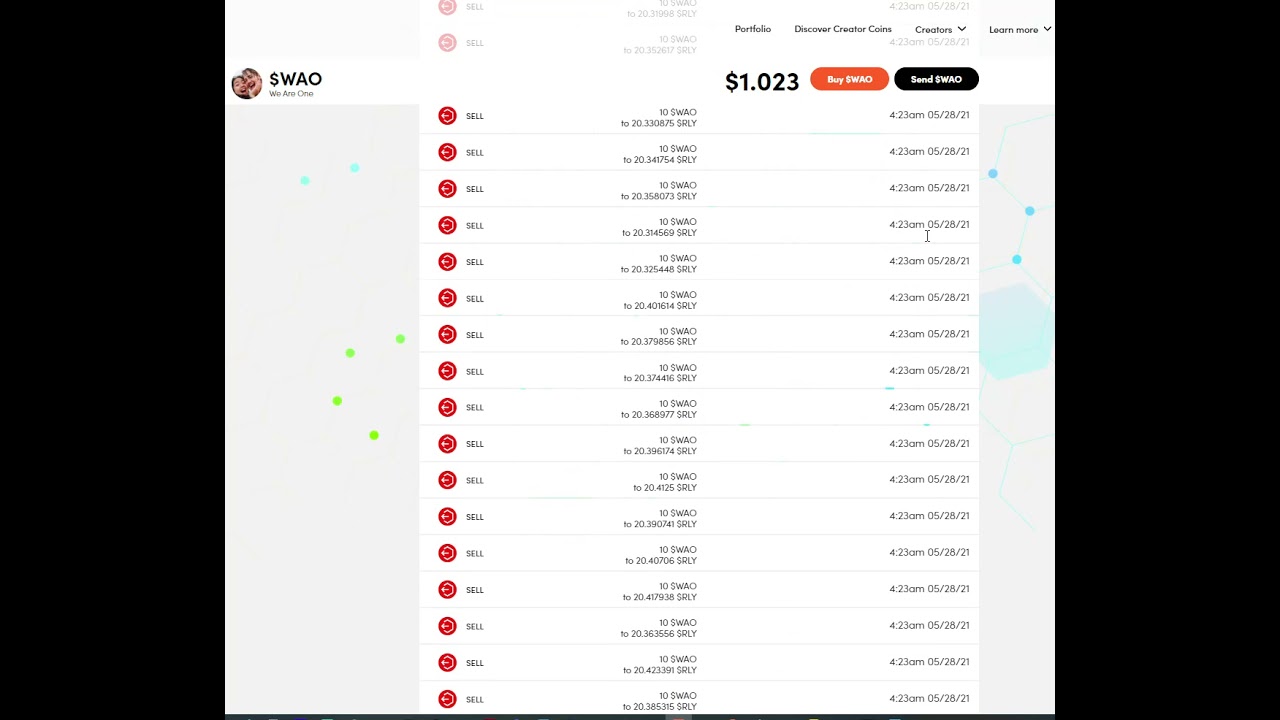

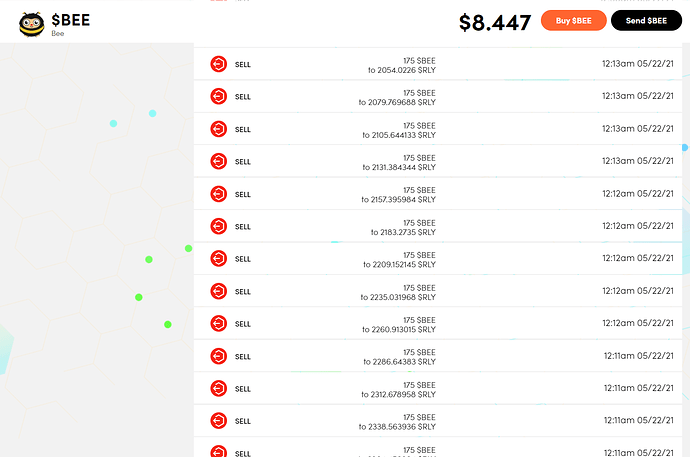

Selling: Automated selling up to the flow control limits is readily apparent after every new coin launch. On some coins, the selling continued right up to the minute the site went down for recent backend work, then picked right back up as soon as the site was live again.

---

Which actions are the most detrimental (i.e., the highest priority to address)? Both are a bad look, but I think protections that keep bots from lining up for creator coin launches will also curb the later selling. Even if human “token jumpers” take the bots’ place to some extent, true fans will at least have a better chance to compete.

---

What measures can we take to mitigate current bot activity and discourage more of it? Someone more tech-savvy should jump in here, but I would think a captcha somewhere in the process might help. Extra steps in the purchase flow have their downside, but perhaps we could require one only for Rally conversions (not credit card purchases) and only on launch day.
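To make the captcha idea a bit more concrete, here is a minimal sketch of that gating rule, assuming the purchase flow knows each purchase's payment method and each coin's launch date. The `Purchase` class, the `"rly_conversion"`/`"credit_card"` labels, and `requires_captcha` are hypothetical names for illustration only, not anything from Rally's actual backend.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Purchase:
    coin_symbol: str
    # Hypothetical labels: "rly_conversion" or "credit_card"
    payment_method: str
    purchase_date: date


def requires_captcha(purchase: Purchase, launch_dates: dict[str, date]) -> bool:
    """Ask for a captcha only on Rally conversions made on a coin's launch day.

    Credit card purchases, and any purchase after launch day, skip the extra
    step, so the added friction is limited to the window where bots line up.
    """
    launch_day = launch_dates.get(purchase.coin_symbol)
    if launch_day is None:
        # Unknown coin: no launch-day rule applies in this sketch.
        return False
    return (
        purchase.payment_method == "rly_conversion"
        and purchase.purchase_date == launch_day
    )


# Usage with a hypothetical coin symbol and launch date
launches = {"EXAMPLE": date(2021, 6, 1)}
print(requires_captcha(Purchase("EXAMPLE", "rly_conversion", date(2021, 6, 1)), launches))  # True
print(requires_captcha(Purchase("EXAMPLE", "credit_card", date(2021, 6, 1)), launches))     # False
print(requires_captcha(Purchase("EXAMPLE", "rly_conversion", date(2021, 6, 2)), launches))  # False
```

Comparing calendar days is the simplest version of "only on day of launch"; an actual implementation might prefer a fixed time window measured from the launch timestamp instead.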

We could also go further and take action against those accounts. A warning that an account appears to be violating the Terms of Service, or an account freeze, would seem like reasonable places to start.

---

What can we reasonably ask the core team to implement? I think it would be incredibly valuable for the core team to weigh in on this issue: what they’ve seen in their backend data, which measures they think would be effective, and why they might or might not see this as a priority. It’s likely the team has already taken action against some accounts for fraud and bot activity, but I don’t think there have been updates to the community on this. To be fair, I don’t think the community has asked for more insight on the issue, either.

The creator council has discussed this issue, and some members have expressed unease about letting creators launch in an environment that seems to have a proliferation of bots getting first access to the creator economy. Delphi is also looking at this issue as part of the mandate in its recently approved proposal.

Please add to this discussion. I’m very curious what the community would like to do here, and how we can use the core team’s guidance on the available options to move this toward a proposal that strengthens the network.

Cheers,

Grand